RECALL

Sam Yen and Renate Fruchter

1998-2001

Anil Saboo, Pratik Biswas, Abhijit Mahindroo, and Renate Fruchter

2002-2005

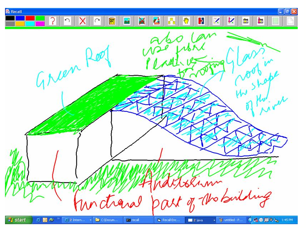

RECALL builds on Donald Schön's concept of the reflective practitioner. It is a drawing application written in Java that captures and indexes the discourse and each individual action on the drawing surface. The drawing application synchronizes with audio/video capture and encoding through a client-server architecture. Once the session is complete, the drawing and video information is automatically indexed and published on a Web server. The server allows distributed, synchronized playback of the drawing session and audio/video from anywhere, at any time. Users can also navigate through the session: by selecting an individual drawing element, they can jump to the part of interest.

The participants can create freehand sketches or import CAD images and annotate them during their discourse. They have a color palette and a tracing paper metaphor that lets them reuse the CAD image and create multiple sketches on top of it. A sidebar shows the existing digital sketch pad pages, enabling quick flipping or navigation through them. At the end of the session, the participants exit and RECALL automatically indexes the sketch, verbal discourse, and video. The session can then be posted on the RECALL server for future interactive replay, sharing with geographically distributed team members, or knowledge reuse in future projects. RECALL provides interactive replay of sessions: the user can select any portion of the sketch, and RECALL replays from that point on by streaming the sketch and audio/video in real time over the network.
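Selection-driven replay of the kind described above needs an index from each drawing action to a time offset in the synchronized audio/video stream. The following is a minimal Python sketch under that assumption. The `Stroke` record, the `SessionIndex` class, and its methods are hypothetical illustrations, not RECALL's actual Java implementation.

```python
from bisect import bisect_left
from dataclasses import dataclass


@dataclass(frozen=True)
class Stroke:
    """One drawing action on the sketch surface (hypothetical record)."""
    stroke_id: int
    t: float   # seconds since the session started (shared clock with audio/video)
    page: int  # digital sketch pad page the stroke belongs to


class SessionIndex:
    """Hypothetical index mapping drawing actions to audio/video time offsets."""

    def __init__(self, strokes):
        # Keep strokes ordered by time so replay can seek with binary search.
        self.strokes = sorted(strokes, key=lambda s: s.t)
        self.times = [s.t for s in self.strokes]
        self.by_id = {s.stroke_id: s for s in self.strokes}

    def seek_offset(self, stroke_id):
        """Return the media offset at which the selected stroke was drawn."""
        return self.by_id[stroke_id].t

    def replay_from(self, stroke_id):
        """Yield every stroke from the selected one onward, in drawing order."""
        start = bisect_left(self.times, self.seek_offset(stroke_id))
        yield from self.strokes[start:]


index = SessionIndex([Stroke(1, 0.0, 1), Stroke(2, 12.5, 1), Stroke(3, 40.2, 2)])
offset = index.seek_offset(2)  # an audio/video player would seek to this offset
print(offset, [s.stroke_id for s in index.replay_from(2)])  # 12.5 [2, 3]
```

A shared session clock is the key design assumption here. If every stroke is timestamped against the same clock as the media encoder, replaying "from that point on" reduces to a seek in the media stream plus a time-ordered replay of the remaining strokes.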

The RECALL technology, patented by Stanford University, aims to improve the performance and cost of knowledge capture, sharing, and reuse. It enables seamless, automatic, real-time video-audio-sketch indexing, Web publishing, sharing, and interactive, on-demand streaming of rich multimedia Web content. It has been used in the AEC Global Teamwork course since 1999 and has been deployed in industry pilot settings to support:

- solo brainstorming, where a project team member works alone and holds a conversation with the evolving artifact, as Donald Schön would say, using a TabletPC augmented with RECALL, and then shares his/her thoughts with the rest of the team by publishing the session on the RECALL server.

- team brainstorming and project review sessions, using a SmartBoard augmented with RECALL.

- best practice knowledge capture, where senior experts in a company, such as designers, engineers, and builders, capture their expertise during project problem-solving sessions for the benefit of the corporation.

Yen, S., Fruchter, R., and Leifer, L., Capture and Analysis of Concept Generation and Development in Informal Media, ICED 12th International Conference on Engineering Design, Munich, Germany, August 1999.

Fruchter, R. and Yen, S., RECALL in Action, Proc. of ASCE ICCCBE-VIII Conference, ed. R. Fruchter, K. Roddis, F. Pena-Mora, Stanford, CA, August 14-16, 2000, pp. 1012-1021.

Fruchter, R., Reiner, K., Yen, S., and Retik, A., KISS: Knowledge and Information Slider System, Proc. of International Conference on Construction Information Technology, June 28-30, 2000, Reykjavik, Iceland.

Fruchter, R., Degrees of Engagement, International Journal of AI & Society, 2005, Vol. 19, pp. 8-21.

Fruchter, R. (2006) The Fishbowl: Degrees of Engagement in Global Teamwork, in I.F.C. Smith (ed.), LNAI 4200 Intelligent Computing in Engineering and Architecture, Springer Verlag, pp. 241-257.

TalkingPaper & A2D: Bridging the Analog and Digital Worlds

Renate Fruchter, Zhen Yin, Subashri Swaminathan, Manjunath Boraiah, and Chhavi Upadhyay

2004 to date

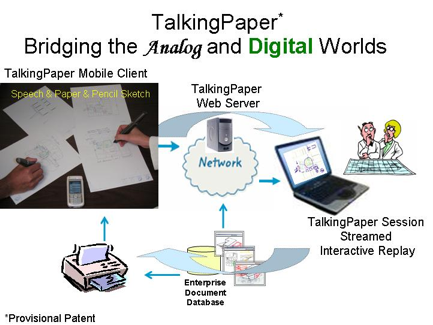

A decision delay can translate into significant financial and business losses. One way to accelerate the decision process is to improve communication among the stakeholders engaged in the project. Capturing, transferring, managing, and reusing data, information, and knowledge in the context in which they are generated can lead to higher productivity, more effective communication, fewer requests for clarification, and a shorter time-to-market cycle. We formalized the concept of reflection in interaction during communicative events among multiple project stakeholders. This concept extends Donald Schön's theory of reflection in action of a single practitioner. We model the observed reflection in interaction with a prototype system called TalkingPaper, a ubiquitous client-server collaborative environment that facilitates knowledge capture, sharing, and reuse during synchronous and asynchronous communicative events. TalkingPaper bridges the paper and digital worlds. It transforms the analog verbal discourse, annotated corporate paper documents, and paper-and-pencil sketches into indexed and synchronized digital content that is published on, and streamed on demand from, a TalkingPaper Web server. All stakeholders can access the TalkingPaper sessions for rapid knowledge sharing and decision-making.

Fruchter, R., Swaminathan, S., Boraiah, M. and Upadhyay, C. (2007) Reflection-in-Interaction, AI & Society, Vol. 22, No. 2, November 2007, pp. 211-226.

V2TS: Voice to Text and Sketch

Yuliya Tarneshenko, Pratik Biswas, and Renate Fruchter

2002-2003

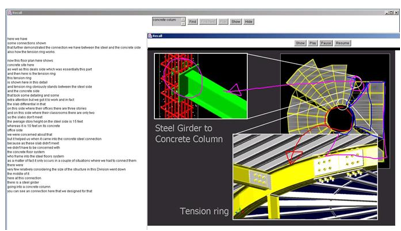

Speech is a fundamental means of human communication. Project teamwork (e.g., in design, construction, or manufacturing) is a social activity. We argue that designers and builders generate and develop concepts through dialogue. These communicative events are typically not captured, so knowledge transfer and reuse opportunities are missed. Verbal communication provides a very valuable indexing mechanism: keywords used in a particular context provide efficient and precise search criteria. We developed the V2TS (Voice to Text and Sketch) prototype, which processes the audio data stream captured by RECALL during the communicative event as follows:

- feed the audio file to a speech recognition engine that transforms voice into text

- process the text and synchronize it with the digital audio and sketch content

- save and index recognized phrases

- synchronize text, audio, and sketch during replay of the session

- support keyword text search and replay from a selected keyword, phrase, or noun phrase in the text of the session.
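The indexing and keyword-replay steps above can be sketched as an inverted index from words to the start times of recognized phrases. This is a minimal Python illustration, assuming the recognizer emits hypothetical `(start_seconds, text)` pairs on the same clock as the RECALL session. The function names are illustrative. A multi-word query is treated as an AND over words rather than an exact phrase or noun-phrase match, which is a simplification of what V2TS describes.

```python
import re
from collections import defaultdict


def tokenize(text):
    """Lower-case word tokens; a stand-in for real linguistic processing."""
    return re.findall(r"[a-z0-9']+", text.lower())


def build_keyword_index(phrases):
    """Map each word to the start times of the recognized phrases containing it.

    `phrases` is a list of (start_seconds, text) pairs, assumed to come from a
    speech recognizer run over the session audio (hypothetical format).
    """
    index = defaultdict(list)
    for start, text in phrases:
        for word in set(tokenize(text)):
            index[word].append(start)
    for times in index.values():
        times.sort()
    return index


def replay_points(index, query):
    """Return start times of phrases that contain every word of the query."""
    words = tokenize(query)
    if not words:
        return []
    hits = set(index.get(words[0], []))
    for word in words[1:]:
        hits &= set(index.get(word, []))
    return sorted(hits)


phrases = [
    (3.0, "Let's move the column grid"),
    (41.5, "the cantilever needs a deeper beam"),
    (88.0, "check the beam at the column"),
]
idx = build_keyword_index(phrases)
print(replay_points(idx, "beam"))         # [41.5, 88.0]
print(replay_points(idx, "column beam"))  # [88.0]
```

Each returned time is a candidate replay point. Because the phrase timestamps share the session clock, the same offset can drive the synchronized sketch and audio replay.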

i-Gesture

Pratik Biswas and Renate Fruchter

2003-2004

Gestures can serve as external representations of abstract concepts that may otherwise be difficult to illustrate. Gestures often accompany verbal statements as an embodiment of mental models that augment the communication of ideas, concepts, or envisioned shapes of products. A gesture is also an indicator of the subject and context of the issue under discussion. We argue that if gestures can be identified and formalized, they can be used as a knowledge indexing and retrieval tool and can serve as useful access points into unstructured digital video data. We developed a methodology and a prototype, called i-Gesture, that allows users to

- define a vocabulary of gestures for a specific domain,

- build a digital library of the gesture vocabulary, and

- mark up entire video streams based on the predefined vocabulary for future search and retrieval of digital content from the archive.
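The three steps above can be illustrated with a small Python sketch. A gesture vocabulary is stored as a library of feature templates, candidate video segments are labeled by their nearest template, and the resulting markup is searched by gesture name. All of these are assumptions for illustration: the two-dimensional feature vectors, the gesture names `span` and `stack`, the distance threshold, and the function names. Nearest-template matching is a stand-in technique, not necessarily the recognition method i-Gesture uses.

```python
import math
from dataclasses import dataclass


@dataclass
class Annotation:
    start: float  # seconds into the video
    end: float
    gesture: str  # label from the domain vocabulary


def nearest_gesture(features, library, max_distance):
    """Return the vocabulary label whose template is closest, or None if too far."""
    best_label, best_dist = None, math.inf
    for label, template in library.items():
        dist = math.dist(features, template)  # Euclidean distance (Python 3.8+)
        if dist < best_dist:
            best_label, best_dist = label, dist
    return best_label if best_dist <= max_distance else None


def mark_up(segments, library, max_distance=1.0):
    """Label each (start, end, features) segment against the gesture library."""
    notes = []
    for start, end, features in segments:
        label = nearest_gesture(features, library, max_distance)
        if label is not None:  # unmatched movements are left unannotated
            notes.append(Annotation(start, end, label))
    return notes


def find(notes, gesture):
    """Return (start, end) spans of the video where a given gesture was marked."""
    return [(n.start, n.end) for n in notes if n.gesture == gesture]


# Hypothetical domain vocabulary with made-up 2-D feature templates.
library = {"span": (1.0, 0.0), "stack": (0.0, 1.0)}
segments = [(5.0, 7.0, (0.9, 0.1)), (20.0, 21.5, (0.1, 0.95)), (30.0, 31.0, (5.0, 5.0))]
notes = mark_up(segments, library)
print(find(notes, "span"))  # [(5.0, 7.0)]
```

The distance threshold keeps movements that match no vocabulary entry out of the index, so a search returns only segments marked with a defined gesture.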

Biswas, P. and Fruchter, R. (2007) Using Gestures to Convey Internal Mental Models and Index Multimedia Content, AI & Society, Vol. 22, No. 2, November 2007, pp. 155-168.

i-Dialogue

Zhen Yin and Renate Fruchter

2003-2006

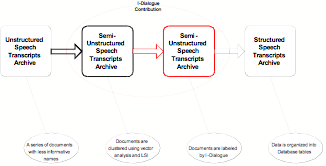

Speech is a fundamental means of human communication. Design and construction are social activities. We argue that designers and builders generate and develop concepts through dialogue. These communicative events are typically not captured, so knowledge transfer and reuse opportunities are missed. Our objective is to capture and mine rich, contextual, social communicative events for further knowledge reuse. We developed a methodology and prototype called i-Dialogue that: (1) captures the knowledge generated during informal communicative events through dialogue, sketching, and gestures in the form of an unstructured digital design knowledge corpus, (2) adds structure to this corpus, and (3) processes the corpus with a novel notion disambiguation algorithm in support of knowledge retrieval. Evaluation experiments with i-Dialogue were performed on a testbed of design-construction projects.

This research builds on Information Retrieval theory and two knowledge management technologies, RECALL [Fruchter and Yen, 2000] and i-Gesture [Biswas and Fruchter, 2005], which were developed in the Project Based Learning Lab at Stanford University.

Information retrieval supports queries over structured or unstructured repositories and retrieves relevant results. Key research questions in this field include: (1) how to find the results that match a query, and (2) how to quantify the similarity among documents in order to help users explore large archives of unstructured multimedia data.
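A common textbook answer to question (2) is to represent each document as a TF-IDF weighted term vector and compare vectors with cosine similarity. The Python sketch below shows that standard technique on made-up transcript snippets. It is not i-Dialogue's notion disambiguation algorithm, and the helper names are illustrative.

```python
import math
from collections import Counter


def tf_idf_vectors(docs):
    """Return one {term: weight} dict per document, weighting tf * log(N / df)."""
    tokenized = [doc.lower().split() for doc in docs]
    n = len(tokenized)
    df = Counter(term for tokens in tokenized for term in set(tokens))
    vectors = []
    for tokens in tokenized:
        tf = Counter(tokens)
        vectors.append({t: c * math.log(n / df[t]) for t, c in tf.items()})
    return vectors


def cosine(u, v):
    """Cosine similarity between two sparse vectors stored as dicts."""
    dot = sum(w * v.get(t, 0.0) for t, w in u.items())
    norm_u = math.sqrt(sum(w * w for w in u.values()))
    norm_v = math.sqrt(sum(w * w for w in v.values()))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)


docs = [
    "steel beam deflection at midspan",
    "beam deflection exceeds limit",
    "schedule the concrete pour",
]
vecs = tf_idf_vectors(docs)
print(cosine(vecs[0], vecs[1]) > cosine(vecs[0], vecs[2]))  # True
```

The IDF factor down-weights terms that appear in many documents. Two transcript segments therefore score as similar mainly when they share distinctive terms such as "beam" and "deflection". A term that occurs in every document receives zero weight.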

Yin, Z. and Fruchter, R. (2007) I-Dialogue: Information Extraction from Informal Discourse, AI & Society, Vol. 22, No. 2, November 2007, pp. 169-184.

DiVAS

Pratik Biswas, Zhen Yin, and Renate Fruchter

2003-2006

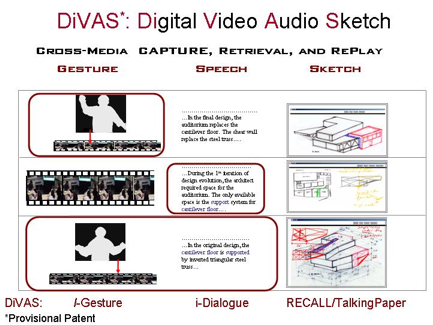

People generate and develop concepts in informal settings through gesture language, verbal discourse, and sketching. Current knowledge capture and reuse solutions cannot exploit the relevance embedded in these multiple multimodal streams of communication. As a result, most of the archived content becomes hard to understand and reuse. We developed the DiVAS (Digital Video-Audio-Sketch) system for cross-media knowledge capture, search, retrieval, and reuse of rich, unstructured, multimedia digital content stored in large corporate repositories. DiVAS seamlessly transforms analog activities into integrated digital video-audio-sketch episodes. These activities include the gestures, discourse, and sketching that take place during creative and informal communicative events among stakeholders engaged in building projects. DiVAS also provides a cross-media semantic analysis and data mining methodology for the indexed digital video-audio-sketch footage that captures the creative human activities of concept generation and problem solving. Together, the integrated capture and the cross-media analysis provide a macro-micro index to large enterprise archives of rich, multimedia, and unstructured content. Knowledge reuse is facilitated through contextual exploration and understanding of the retrieved content.
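One way to picture an integrated video-audio-sketch episode is to merge time-stamped events from each modality into a single timeline. A hit in one stream, such as a keyword in the transcript, then pulls in the sketch and gesture events around it. The Python sketch below assumes a hypothetical `Event` record and illustrative stream labels, and uses a fixed time window as a simplified notion of an "episode". It illustrates cross-media alignment only, not DiVAS's semantic analysis or data mining methodology.

```python
import heapq
from typing import NamedTuple


class Event(NamedTuple):
    t: float      # seconds since session start (shared clock across streams)
    stream: str   # "audio", "sketch", or "gesture" (hypothetical labels)
    payload: str  # recognized phrase, stroke id, or gesture label


def merge_streams(*streams):
    """Merge per-modality event lists (each sorted by time) into one timeline."""
    return list(heapq.merge(*streams, key=lambda e: e.t))


def episode(timeline, t_hit, window=10.0):
    """Collect events from every stream within +/- window seconds of a hit."""
    return [e for e in timeline if abs(e.t - t_hit) <= window]


audio = [Event(41.5, "audio", "the cantilever needs a deeper beam")]
sketch = [Event(38.0, "sketch", "stroke 17"), Event(90.0, "sketch", "stroke 30")]
gesture = [Event(44.0, "gesture", "span")]

timeline = merge_streams(audio, sketch, gesture)
hit = next(e.t for e in timeline if "beam" in e.payload)  # keyword hit in the audio text
print([e.stream for e in episode(timeline, hit)])  # ['sketch', 'audio', 'gesture']
```

The merged timeline plays the role of a micro-level index within one session. A macro-level index over many sessions could map each session to its keyword and gesture vocabulary before this finer-grained lookup is applied.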

Fruchter, R., Biswas, P. and Yin, Z. (2007) DiVAS: Digital Video Audio Sketch a Prototype for Cross-Media Capture, Sharing, and Reuse of Rich Contextual Knowledge, Proc. of ASCE Conference on Computing in Civil Engineering, Soibelman, L. and Akinci, B., eds., CMU, Pittsburgh, July 2007, pp. 322-329.